~~~ Algo Trading: Pitfalls of Backtesting ~~~

Backtests are needed because there's no closed algorithm or formula that could calculate the expected performance of a strategy. The only way to find out with certainty is trading it for a couple years. However, we usually want to know the expected performance beforehand - particularly, whether the system is profitable at all. For this purpose we run it with a couple years of historical price data and check the result. Below a chart produced by a typical backtest, the profit represented by the blue bars:

The obvious problem: Historical data lies in the past, while we want to know the strategy performance with future live data. Since markets can change, past data is not always a good proxy of future data. We cannot avoid, but work around this problem. There are clever algorithms that can permanently observe live trading performance, compare it with the expected backtest performance, and raise the alarm (or pull the plug) when both deviate too much. The Cold Blood Index is an excellent algorithm for that. It's implemented in Zorro and automatically applied to live trading when backtest data is available. There are many other requirements for a serious backtest that produces really usable information about future live trading performance: Out-of-sample data usage. When a strategy was trained or optimized with historical data, it is mandatory that the backtest uses a different data set. Otherwise, when testing with the very same data on which the strategy was optimized (in-sample data), the backtest is biased and its result way too optimistic and useless. This effect is called overfitting. The usual way to avoid overfitting bias and still use a representative data set for the backtest is walk forward analysis, which is supported by most serious backtest tools.

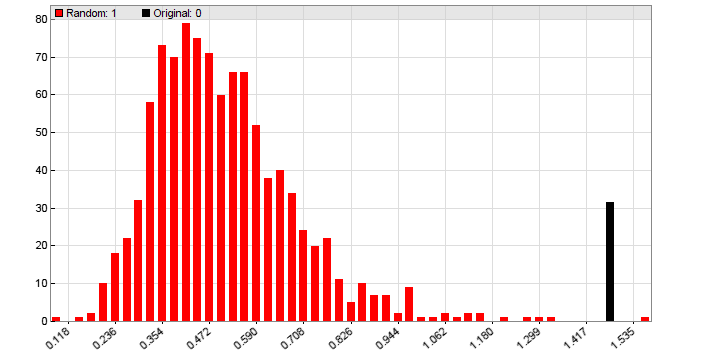
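The following minimal C sketch illustrates the walk-forward idea. It is plain C, not Zorro's own API. The sketch splits a price history into rolling cycles. Each cycle is optimized on a training window and then tested on the bars immediately following it, which the optimizer never saw. The `WfoCycle` struct, the `wfo_windows` function, and the fixed training and test lengths are hypothetical choices for this example. They do not describe how Zorro or any particular tool sets up its cycles.

```c
#include <assert.h>

/* One walk-forward cycle (hypothetical layout): optimize on bars
   [train_start, test_start), then test on the unseen bars
   [test_start, test_end). */
typedef struct {
    int train_start;
    int test_start;
    int test_end;
} WfoCycle;

/* Fills `out` (capacity `max_cycles`) with rolling walk-forward windows
   over `n_bars` bars of history. The window slides forward by one test
   length per cycle, so the test segments are adjacent and never overlap.
   Returns the number of cycles written. */
int wfo_windows(int n_bars, int train_len, int test_len,
                WfoCycle *out, int max_cycles)
{
    assert(train_len > 0 && test_len > 0);
    if (train_len <= 0 || test_len <= 0) return 0; /* guard for NDEBUG builds */

    int count = 0;
    for (int start = 0;
         start + train_len + test_len <= n_bars && count < max_cycles;
         start += test_len) {
        out[count].train_start = start;
        out[count].test_start  = start + train_len;
        out[count].test_end    = start + train_len + test_len;
        count++;
    }
    return count;
}
```

With 1000 bars, a 400-bar training window, and 100-bar test windows, this produces 6 cycles. Their test segments cover bars 400 to 1000 without gaps or overlaps. Concatenating the test-segment results therefore gives a 600-bar backtest in which each segment was traded only with parameters optimized on earlier data.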

Accuracy. Every broker or exchange has its own individual set of account parameters, such as leverage, swaps or interest, commission, contract size, market hours, and symbols that are specific to each traded asset. Most of these parameters can strongly affect the backtest result. A backtest therefore cannot produce a 'general result', but must consider the very account that will be used for live trading. For this purpose, Zorro supports account-specific sets of asset parameters for use in backtests. They can either be set up by the user or be loaded automatically from the broker API (if available), and they determine the individual account properties.

Speed. Developing an algo trading strategy involves backtesting it all the time to determine the ideal algorithm for exploiting the targeted market inefficiency. For this, the backtest can't be fast enough. It makes a difference whether a backtest needs a few seconds, as with Zorro, or 10 minutes, as with some trading platforms. Optimization and training processes run backtests many thousands of times to determine the best-suited parameter set or to train machine learning models. A final Reality Check (see below) even trains and optimizes several hundred times with different price curves. All this requires extremely fast backtests, which is why Zorro uses a highly optimized backtest module and C, one of the fastest available languages, for its algo trading scripts.

Level of detail. A backtest should reveal more than just the profit over the backtest period. When comparing alternative algorithms, other metrics are more important, such as the Sharpe ratio, equity curve linearity, Monte Carlo drawdown simulation, or portfolio analysis. The algorithm with the highest profit is usually not the best one. Depending on the strategy, it can also be necessary to derive individual metrics from the trade history or equity curve and add them to the backtest result. In short, a serious performance report can't be detailed enough.
There's a problem, though. When can we trust the backtest?

Suppose we have developed an algorithmic trading system that passed a walk-forward backtest with flying colors. Can we now be sure to achieve the same profit in real trading? Unfortunately not. Even when testing with out-of-sample data, and even with no training or optimization at all, backtest bias is likely still present. If not parameters, then algorithms, trading rules, setups, and assets are normally selected or rejected based on their backtest performance. This effect is hard to avoid when developing an algorithmic trading system. It is the reason why backtest results can be unreliable even when we have followed all the rules and even when the markets have not changed.

Therefore, any backtest should always be verified with a Reality Check to find out whether we can trust the test. The purpose of a Reality Check is to determine whether the strategy really exploits a market inefficiency. The alternative is that it has merely been adapted to historical data through involuntary backtest bias and will therefore fail in live trading. There are several reality check methods, such as White's Reality Check, which are all based on a Monte Carlo analysis with randomized price data or randomized equity curves. Zorro comes with a reality check framework that can be applied to any algo trading strategy. It runs up to 1000 training and test cycles with randomized prices, and generates a histogram like this one:

The black bar represents the backtest result with the real price curve; the red bars are the results with randomized prices. Randomizing eliminates almost all market inefficiencies from the price curve. When training the strategy on real data produces a significantly better result than 95% of the training runs with randomized data, we assume that the tested algo trading system really has an edge. In that case it exploits a real market inefficiency and is likely to generate profit in live trading. This is the case for the system in the above histogram.
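The idea behind the Reality Check can be sketched in a few lines of C. This is a heavily simplified illustration under several assumptions. It is not Zorro's framework and not White's Reality Check:

- The strategy is a hypothetical momentum rule whose only "training" is picking the best lookback from a small grid.
- Prices are randomized by shuffling the sequence of price changes. This is one common way to destroy serial structure while keeping the distribution of changes; it is not necessarily the method Zorro uses.
- Only the trained in-sample results are compared, rather than full training and test cycles.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical toy strategy: be long for the next bar when the summed
   price change over the last `lookback` bars is positive, else stay flat.
   Returns the summed price changes captured while long. */
static double momentum_profit(const double *chg, int n, int lookback)
{
    double profit = 0.0, past = 0.0;
    for (int k = 0; k < lookback; k++) past += chg[k];
    for (int i = lookback; i < n; i++) {
        if (past > 0.0) profit += chg[i];
        past += chg[i] - chg[i - lookback];   /* slide the window forward */
    }
    return profit;
}

/* "Training": try each lookback in a small grid and keep the best profit. */
static double train_best(const double *chg, int n)
{
    static const int grid[] = {2, 5, 10, 20, 50};
    double best = momentum_profit(chg, n, grid[0]);
    for (int g = 1; g < (int)(sizeof grid / sizeof grid[0]); g++) {
        double p = momentum_profit(chg, n, grid[g]);
        if (p > best) best = p;
    }
    return best;
}

static uint32_t xorshift32(uint32_t *s)
{
    uint32_t x = *s;
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    return *s = x;
}

/* Trains on the real price changes, then on `runs` shuffled copies, and
   returns the fraction of randomized runs that the real result beats.
   Returns -1.0 if memory allocation fails. */
double reality_check(const double *chg, int n, int runs, uint32_t seed)
{
    assert(n > 50 && runs > 0);              /* longest lookback is 50 */
    double real = train_best(chg, n);
    double *tmp = malloc((size_t)n * sizeof *tmp);
    if (!tmp) return -1.0;

    uint32_t state = seed ? seed : 1u;
    int beaten = 0;
    for (int i = 0; i < n; i++) tmp[i] = chg[i];
    for (int r = 0; r < runs; r++) {
        for (int i = n - 1; i > 0; i--) {    /* shuffle the price changes */
            int j = (int)(xorshift32(&state) % (uint32_t)(i + 1));
            double t = tmp[i]; tmp[i] = tmp[j]; tmp[j] = t;
        }
        if (real > train_best(tmp, n)) beaten++;
    }
    free(tmp);
    return (double)beaten / runs;
}
```

The returned value is the fraction of randomized runs that the real-data result beats. In the terms of the text, a value above 0.95 suggests a real edge. The same training step runs on every randomized curve, so the comparison also captures the bias from selecting the best parameter, which a single backtest can't reveal.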
